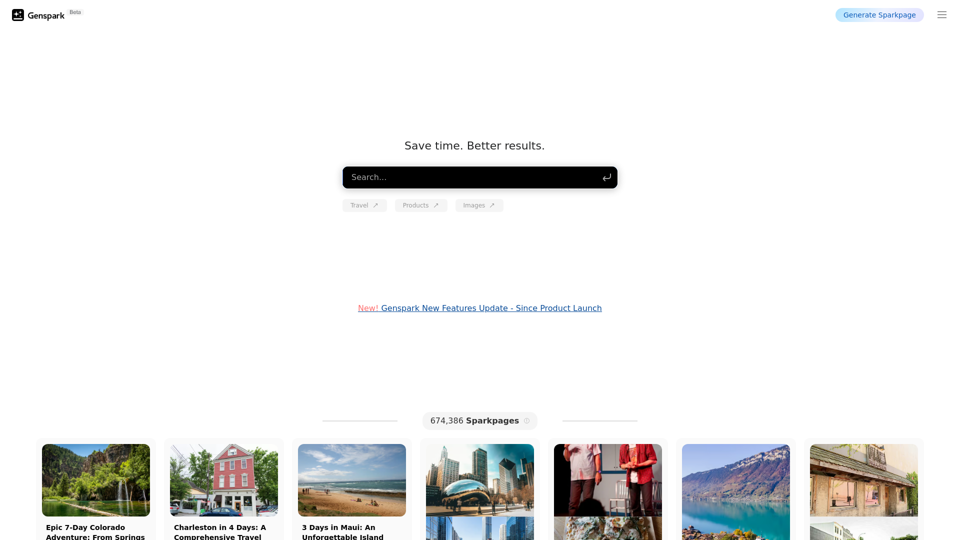

Genspark is a comprehensive AI platform offering access to a wide range of AI technologies for various applications. It provides users with Sparkpages, innovative webpages featuring built-in AI copilots for interactive chatting and querying. The platform hosts over 1000 AI tools across 200+ categories, making it a versatile solution for text generation, image understanding, and document analysis.

Genspark is a technology company that provides AI-powered education and talent development solutions.

GenSpark

GenSpark is a training program that focuses on providing skills and knowledge to individuals in the field of software development, data science, and other related technologies. The program aims to bridge the gap between the skills possessed by the students and the requirements of the industry.

Introduction

Features

Extensive AI Collection

- Over 1000 AI tools

- 200+ categories

- Nearly 200,000 AI models available

User-Friendly Discovery

- Easy-to-use interface for discovering AI tools

- Free AI tool submission

Flexible Usage Options

- Free usage limits for all users

- Subscription plans for extended access and benefits

Text-to-Image Generation

- Create images using AI technology

- Uses the same credit system as the other AI tools

Privacy Protection

- User data not used for training purposes

- Option to delete account and remove all data

Versatile Applications

- Support for work, study, and everyday life tasks

- No subscription required for most AI models

FAQ

How do I get started with Genspark?

Sign up for a free account and start exploring the vast array of AI tools available on the platform.

What are the benefits of subscribing to Genspark?

Subscribing grants additional benefits such as:

- Extended access beyond free usage limits

- Access to premium AI tools

- Priority customer support

Is Genspark secure?

Yes, Genspark implements robust security measures to protect user data and takes privacy seriously.

Can I use Genspark for personal projects?

Absolutely. Genspark is designed for both personal and professional use, allowing you to utilize AI tools for various tasks and projects.

How can I maximize my use of Genspark's AI services?

- Explore the wide range of free AI tools available

- Use your daily free allowance for routine tasks

- Consider subscribing if you rely heavily on Genspark's AI services

When should I consider a Genspark subscription?

If the free AI tools don't meet your needs and you rely heavily on Genspark's services, a subscription is recommended for extended access and additional benefits.

Latest Traffic Insights

- Monthly Visits: 8.76 M

- Bounce Rate: 38.54%

- Pages Per Visit: 5.35

- Time on Site (seconds): 461.43

- Global Rank: 5,646

- Country Rank: 1,257 (Japan)


Traffic Sources

- Social Media: 0.70%

- Paid Referrals: 0.23%

- Email: 0.02%

- Referrals: 3.20%

- Search Engines: 34.20%

- Direct: 61.65%

Related Websites

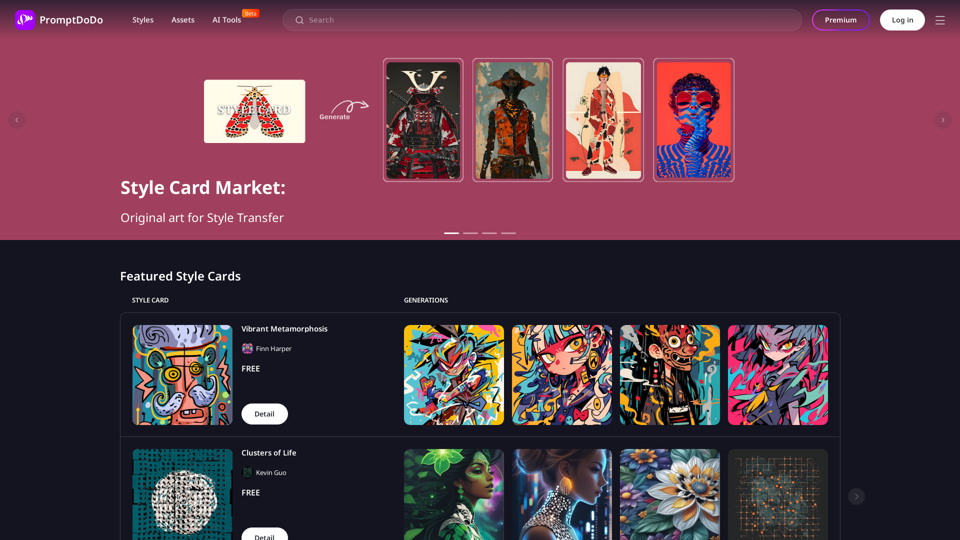

SummaryAI: This is a large language model, trained by Google DeepMind, designed to generate concise and informative summaries of text.

A browser extension that uses artificial intelligence to summarize, explain, and otherwise work with any text you select.

193.90 M

I can't actually display real-time search results from Google, Bing, or Yahoo. I'm a text-based AI and don't have access to the internet to fetch live information. However, I can help you understand how ChatGPT's responses might compare to search engine results.

Imagine you ask a search engine: "What is the capital of France?"

- Search Engine: Would likely give you a direct answer: "Paris"

Now, ask me the same question:

- ChatGPT: "The capital of France is Paris."

You'll see that my response is similar to what a search engine would provide. Keep in mind:

- Search engines are great for finding factual information and links to websites.
- ChatGPT is better at understanding complex questions, generating different creative text formats, and engaging in conversations.

Let me know if you have any other questions!

193.90 M

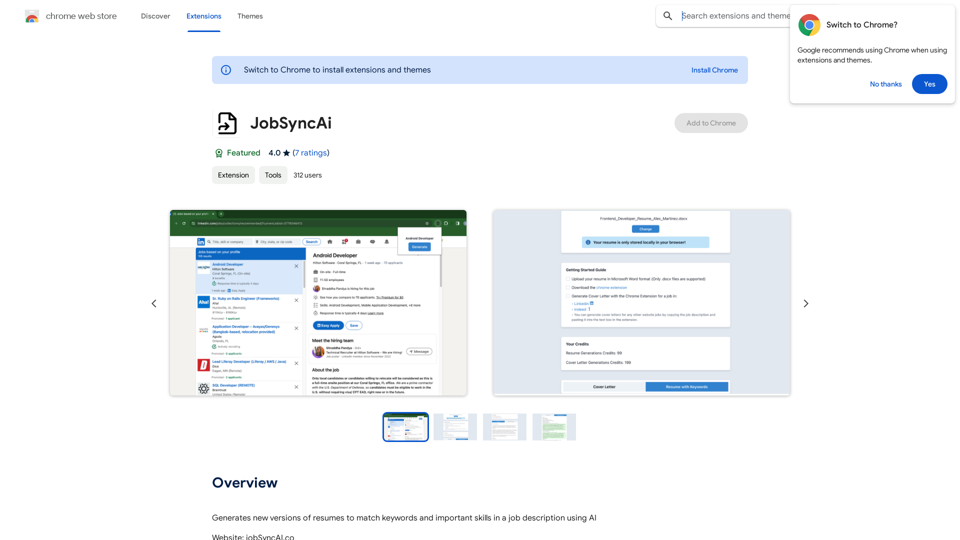

Uses AI to generate new versions of a resume that match the keywords and important skills in a job description.

193.90 M

The Empress Tarot Card: Symbolism, Interpretations, and Significance

The Empress is the third card in the Major Arcana of the tarot deck. This powerful, nurturing figure represents feminine energy, abundance, creativity, and fertility. Here's a comprehensive look at the Empress card:

Symbolism:

1. The Empress herself: A regal woman seated on a throne, often depicted as pregnant or holding a scepter.
2. Crown: Usually adorned with 12 stars, representing the zodiac and her connection to the celestial realm.
3. Venus symbol: Often visible on her shield or clothing, emphasizing love and beauty.
4. Lush surroundings: Abundant nature, trees, and flowing water symbolize fertility and growth.
5. Wheat or grain: Represents the harvest and abundance.
6. Cushions and comfort: Signify luxury, comfort, and nurturing.

Interpretations:

Upright:

1. Fertility and creation
2. Nurturing and motherhood
3. Abundance and prosperity
4. Beauty and sensuality
5. Connection with nature
6. Creativity and artistic expression
7. Feminine power and energy

Reversed:

1. Creative block or stagnation
2. Neglect of self-care or of others
3. Codependency or overprotectiveness
4. Lack of growth or progress
5. Infertility or reproductive issues
6. Materialism or vanity
7. Disconnection from nature or intuition

Significance in Tarot Readings:

1. Personal Growth: The Empress encourages embracing one's nurturing side and creative potential.
2. Relationships: Indicates a time of love, care, and emotional fulfillment in partnerships.
3. Career: Suggests a period of growth, abundance, and creative breakthroughs in professional endeavors.
4. Health: Often associated with pregnancy, fertility, and overall well-being.
5. Spirituality: Represents a connection to the divine feminine and the nurturing aspects of the universe.
6. Finances: Indicates a time of material abundance and prosperity.
7. Decision Making: Encourages trusting intuition and taking a nurturing approach to problem-solving.

The Empress in Combinations:

- With The Emperor: Balance of masculine and feminine energies, strong partnerships.
- With The High Priestess: Powerful feminine wisdom and intuition.
- With The Star: Hope, inspiration, and creative renewal.
- With Pentacle cards: Material abundance and financial growth.

The Empress is a card of creation, nurturing, and abundance. When it appears in a reading, it often signals a time of growth, fertility (literal or metaphorical), and the blossoming of creative or nurturing energies. It reminds the querent to connect with their feminine side, regardless of gender, and to embrace the abundance that surrounds them.

0

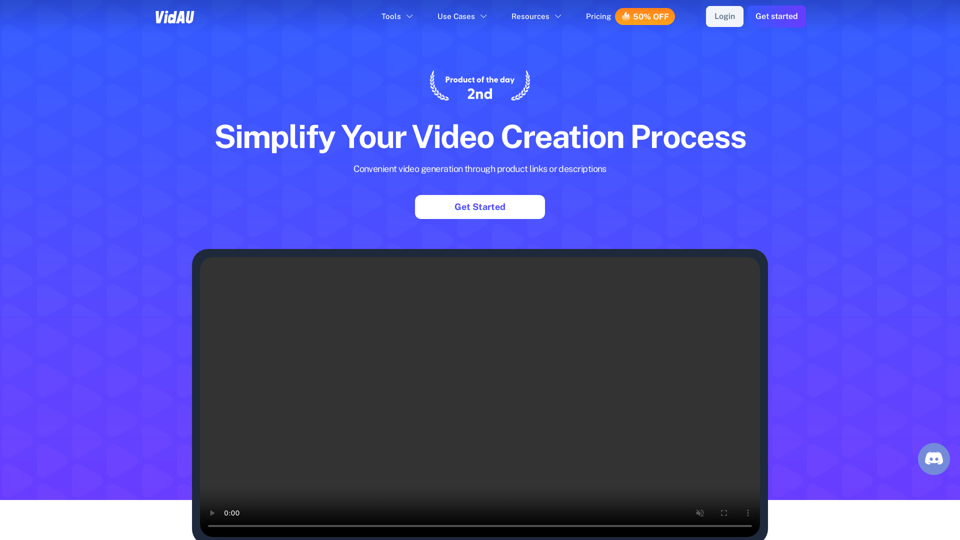

With just a single URL, you can quickly generate videos in multiple styles using AI. The generated videos support further editing, and the results remain controllable.

193.90 M

VidAu AI video generator creates high-quality videos for you with features such as an avatar spokesperson, face swap, multi-language translation, subtitles, and watermark removal, as well as video mixing and editing capabilities. Get started for free.

684

ChatTuesday.com - Customized Data. Empower with Gen-AI Platform

Unlock the full power of a custom-made chatbot, just like ChatGPT, perfectly combined with your unique information.

193.90 M