PromptFolder is a Chrome extension designed to streamline the management of prompts for AI tools like ChatGPT and Midjourney. It offers a comprehensive solution for saving, sharing, and discovering prompts, enhancing user productivity and creativity in AI interactions. With features like prompt organization, customization, and community sharing, PromptFolder caters to both individual users and collaborative teams seeking to optimize their AI prompt workflows.

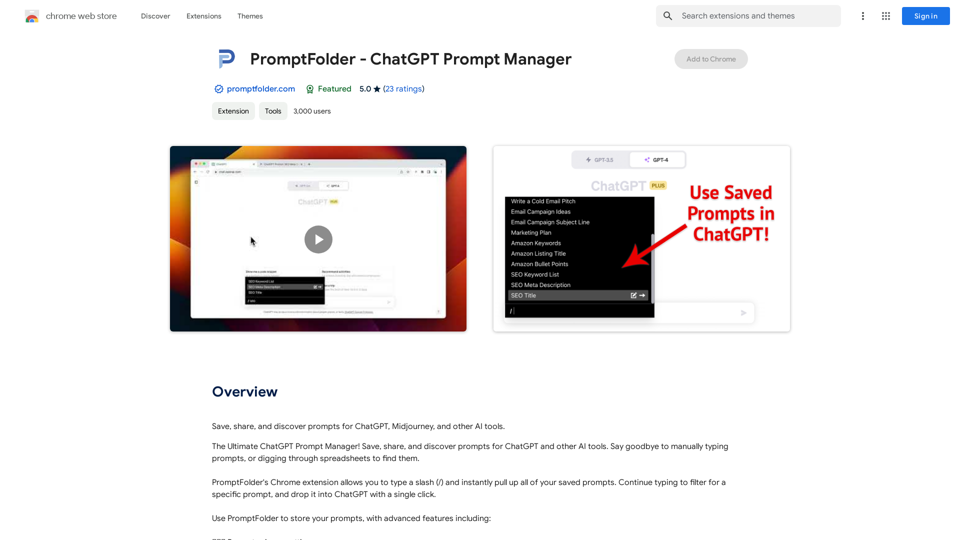

PromptFolder - ChatGPT Prompt Manager

Save, share, and discover prompts for ChatGPT, Midjourney, and other AI tools.

Features

Efficient Prompt Management

- Save prompts for future use

- Organize prompts into folders

- Search functionality for quick prompt retrieval
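Saving, foldering, and search can be pictured with a small in-memory model. This is a minimal sketch under our own assumptions: the `Prompt` and `PromptLibrary` names, the single-level `folder` field, and case-insensitive substring search are all hypothetical and do not describe PromptFolder's actual data model or storage.

```python
from dataclasses import dataclass, field


@dataclass
class Prompt:
    # Hypothetical record; PromptFolder's real schema is not documented here.
    title: str
    body: str
    folder: str = "Unsorted"


@dataclass
class PromptLibrary:
    prompts: list[Prompt] = field(default_factory=list)

    def save(self, title: str, body: str, folder: str = "Unsorted") -> Prompt:
        """Store a prompt for later reuse, filed under a folder name."""
        prompt = Prompt(title, body, folder)
        self.prompts.append(prompt)
        return prompt

    def folders(self) -> list[str]:
        """Return the distinct folder names, sorted alphabetically."""
        return sorted({p.folder for p in self.prompts})

    def in_folder(self, folder: str) -> list[Prompt]:
        """List the prompts filed under one folder, in insertion order."""
        return [p for p in self.prompts if p.folder == folder]

    def search(self, term: str) -> list[Prompt]:
        """Case-insensitive substring match over titles and bodies."""
        needle = term.casefold()
        return [
            p for p in self.prompts
            if needle in p.title.casefold() or needle in p.body.casefold()
        ]


lib = PromptLibrary()
lib.save("Summarize article", "Summarize the following text in 3 bullets:", "Writing")
lib.save("Logo concept", "minimalist logo of a fox, flat vector", "Midjourney")
lib.save("Fix grammar", "Correct the grammar of this paragraph:", "Writing")
print(lib.folders())                               # ['Midjourney', 'Writing']
print([p.title for p in lib.search("grammar")])    # ['Fix grammar']
```

A flat folder string keeps the sketch short; a real library might instead use nested folder paths or tags.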

Seamless Integration

- Type "/" to instantly access saved prompts

- Filter saved prompts and insert one into ChatGPT with a single click

- Compatible with various AI tools (ChatGPT, Midjourney, etc.)
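The "/" shortcut amounts to watching the text box for a slash token and filtering saved prompts by whatever follows it. The sketch below is our own illustration, not PromptFolder's code: the rule that "/" must start the input or follow whitespace (so URLs do not trigger it), and the prefix-before-substring ranking in the hypothetical `pick` helper, are assumptions.

```python
import re
from typing import Optional

# Hypothetical rule: a "/" at the start of the input or after whitespace,
# followed by non-space characters up to the caret, opens the prompt picker.
TRIGGER = re.compile(r"(?:^|\s)/(\S*)$")


def slash_query(text_before_caret: str) -> Optional[str]:
    """Return the filter text typed after "/", or None if no trigger is active."""
    match = TRIGGER.search(text_before_caret)
    return match.group(1) if match else None


def pick(titles: list[str], query: str, limit: int = 5) -> list[str]:
    """Rank titles that start with the query ahead of titles that merely contain it."""
    q = query.casefold()
    starts = [t for t in titles if t.casefold().startswith(q)]
    contains = [t for t in titles if q in t.casefold() and t not in starts]
    return (starts + contains)[:limit]


saved = ["Summarize article", "Fix grammar", "Translate to French", "Grammar tutor"]
query = slash_query("Please help me /gram")
if query is not None:
    print(pick(saved, query))  # ['Grammar tutor', 'Fix grammar']
```

Typing a space after the query closes the trigger, since the regex requires non-space characters all the way to the caret.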

Customization and Flexibility

- Use variables and comments to customize prompts

- Access prompts from any device
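Variables and comments turn a saved prompt into a reusable template. PromptFolder's own placeholder and comment syntax is not described on this page, so the sketch below assumes a hypothetical `{{name}}` placeholder form and `#`-prefixed comment lines that are stripped before the prompt is inserted; treat both conventions as illustrative only.

```python
import re

# Hypothetical syntax: {{variable}} placeholders; lines starting with "#" are
# author comments that are removed before the prompt is inserted.
PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def variables(template: str) -> list[str]:
    """List placeholder names in first-appearance order, without duplicates."""
    return list(dict.fromkeys(PLACEHOLDER.findall(template)))


def render(template: str, values: dict[str, str]) -> str:
    """Drop comment lines, then fill every placeholder; fail on missing values."""
    kept = [line for line in template.splitlines() if not line.lstrip().startswith("#")]
    body = "\n".join(kept)
    missing = [name for name in variables(body) if name not in values]
    if missing:
        raise KeyError(f"no value for: {', '.join(missing)}")
    return PLACEHOLDER.sub(lambda m: values[m.group(1)], body)


template = """# Tip: {{tone}} works best as one word, e.g. "formal" or "playful"
Write a {{tone}} product description for {{product}}.
Mention {{product}} at most twice."""

print(variables(template))  # ['tone', 'product']
print(render(template, {"tone": "playful", "product": "a reusable water bottle"}))
```

Raising on a missing value, rather than leaving `{{product}}` in place, keeps a half-filled prompt from being sent by mistake.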

Community and Collaboration

- Share prompts with others

- Discover new prompts from the community

- Explore the community section for ideas and inspiration

User-Friendly Interface

- Chrome extension for easy access

- Intuitive prompt organization system

Versatile Pricing Options

- Free version with limited features

- Paid subscription with additional features and benefits

FAQ

How does PromptFolder enhance productivity?

PromptFolder boosts productivity by:

- Saving the time spent retyping prompts by hand

- Providing quick access to a library of prompts

- Reducing errors through customization features

- Facilitating collaboration and idea sharing

What are some best practices for using PromptFolder?

Tips for maximizing PromptFolder's potential:

- Utilize folders for organized prompt management

- Leverage variables and comments for prompt customization

- Actively share and collaborate on prompts

- Explore the community section for new ideas

- Use the search function for efficient prompt retrieval

Is PromptFolder suitable for team use?

Yes, PromptFolder supports team collaboration by:

- Allowing prompt sharing among team members

- Providing a platform for discovering and adapting shared prompts

- Enabling consistent prompt usage across the team

Latest Traffic Insights

- Monthly visits: 193.90 M

- Bounce rate: 56.27%

- Pages per visit: 2.71

- Time on site: 115.91 s

- Global rank: not available

- Country rank: not available

Traffic Sources

- Social Media: 0.48%

- Paid Referrals: 0.55%

- Email: 0.15%

- Referrals: 12.81%

- Search Engines: 16.21%

- Direct: 69.81%

Related Websites

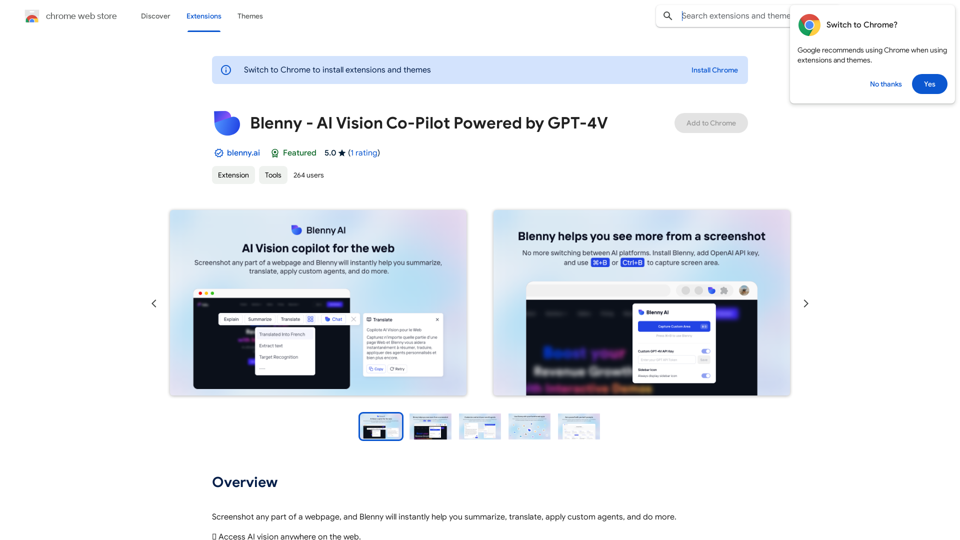

Screenshot any part of a webpage, and Blenny will instantly help you summarize, translate, apply custom agents, and do more.

Ask AI Browser Extension

Description

The Ask AI browser extension is a cutting-edge tool that revolutionizes the way you interact with the internet. This innovative extension harnesses the power of artificial intelligence to provide you with instant answers, suggestions, and insights as you browse the web.

Features

- Instant Answers: Get quick answers to your questions without leaving the current webpage.

- Smart Suggestions: Receive relevant suggestions based on your browsing history and preferences.

- AI-driven Insights: Uncover hidden gems and interesting facts about the topics you're interested in.

- Personalized Experience: Enjoy a tailored browsing experience that adapts to your needs and preferences.

How it Works

1. Install the Extension: Add the Ask AI browser extension to your favorite browser.

2. Ask Your Question: Type your question in the search bar or highlight a phrase on a webpage.

3. Get Instant Answers: Receive accurate and relevant answers, suggestions, and insights in real time.

Benefits

- Save Time: Get instant answers and reduce your search time.

- Enhance Productivity: Stay focused on your tasks with relevant suggestions and insights.

- Improve Knowledge: Expand your knowledge with interesting facts and hidden gems.

Get Started

Download the Ask AI browser extension today and experience the future of browsing!

Use an AI like ChatGPT to condense and translate articles into short, ready-to-publish paragraphs directly on the webpage.

SafeGPT

SafeGPT is an AI model designed to generate human-like text while avoiding harmful or toxic content. It is trained on a massive dataset of text from the internet and can understand and respond to user input in a conversational manner. SafeGPT can generate text on a wide range of topics, from simple questions to complex discussions, and can also create stories, dialogues, and more.

Key Features

- Harmless responses: SafeGPT is designed to avoid generating harmful or toxic content, making it a safe and reliable tool for users of all ages.

- Conversational understanding: SafeGPT understands and responds to user input conversationally, so the interaction feels more human.

- Creative freedom: SafeGPT can generate text on a wide range of topics, from simple questions to complex discussions, including stories and dialogues.

- Continuous learning: SafeGPT keeps improving its responses based on user feedback, becoming more accurate and helpful over time.

Use Cases

- Chatbots and virtual assistants: SafeGPT can power chatbots and virtual assistants, giving users a safe and reliable way to interact with machines.

- Content generation: SafeGPT can generate content for websites, social media, and other platforms, reducing the workload of content creators.

- Language learning: SafeGPT can help language learners practice their conversational skills in a safe, interactive way.

Benefits

- Improved safety: SafeGPT's ability to avoid generating harmful or toxic content makes it a safer tool for users of all ages.

- Increased creativity: SafeGPT's ability to generate text on a wide range of topics and in various styles makes it a valuable tool for content creators and language learners.

- Enhanced user experience: SafeGPT's conversational understanding and human-like responses make it a more enjoyable and interactive tool.
